SpyderBot · April 9, 2026 · Insights

GEO Audit

How to identify why your brand is not showing up in AI answers

The problem

Most companies trying generative engine optimization (GEO) ask:

- “Why are we not showing up in ChatGPT?”

- “Why are competitors always recommended?”

But they don’t have a clear way to diagnose it

They:

- Test a few prompts

- See inconsistent results

- Make assumptions

The result

No clarity, no direction, no improvement

What is a GEO audit?

A GEO audit is:

A structured analysis of how AI systems see, select, and represent your brand

It answers:

- Where you appear

- Where you don’t

- Why competitors are selected

- What signals you are missing

Key insight

GEO is not guesswork

It is diagnosable

The GEO Audit Framework (7 steps)

1. Query Audit

“Where should you appear?”

Start by defining:

Core query types:

- “best [category] tools”

- “alternatives to [competitor]”

- “tools for [use case]”

What to check:

- Do you appear?

- How often?

Red flag:

- Missing in high-intent queries
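One way to turn the core query types above into a repeatable prompt set is to expand them from templates. This is a minimal sketch: the `build_prompts` helper and the fill-in values (category, competitor, use cases) are hypothetical placeholders, not part of any tool.

```python
# Templates mirror the three core query types listed above.
TEMPLATES = {
    "best": "best {category} tools",
    "alternatives": "alternatives to {competitor}",
    "use_case": "tools for {use_case}",
}

def build_prompts(categories, competitors, use_cases):
    """Return (query_type, prompt) pairs for every supplied value."""
    prompts = []
    prompts += [("best", TEMPLATES["best"].format(category=c)) for c in categories]
    prompts += [("alternatives", TEMPLATES["alternatives"].format(competitor=c)) for c in competitors]
    prompts += [("use_case", TEMPLATES["use_case"].format(use_case=u)) for u in use_cases]
    return prompts

# Hypothetical inputs; replace with your own category, competitors, and use cases.
prompts = build_prompts(
    categories=["project management"],
    competitors=["ExampleCo"],
    use_cases=["remote teams", "agencies"],
)
for query_type, prompt in prompts:
    print(query_type, "->", prompt)
```

Keeping the query type next to each prompt makes it easier to see later which types you are missing from.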

2. Multi-LLM Audit

“Do you appear across AI systems?”

Check across:

- ChatGPT

- Gemini

- Perplexity

- Copilot

- Claude

- Grok

- Llama

What to check:

- Consistency

- Differences

Red flag:

- Visible in one model, missing in others
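The red flag above can be checked mechanically once you have recorded, per model and prompt, whether your brand appeared. The sketch below assumes that record already exists; the observations are made-up placeholders, and `inconsistent_prompts` is a hypothetical helper name.

```python
from collections import defaultdict

# (model, prompt, brand_appeared): placeholder observations, not real results.
observations = [
    ("ChatGPT", "best crm tools", True),
    ("Gemini", "best crm tools", False),
    ("Perplexity", "best crm tools", True),
    ("ChatGPT", "tools for remote teams", False),
    ("Gemini", "tools for remote teams", False),
    ("Perplexity", "tools for remote teams", False),
]

def inconsistent_prompts(observations):
    """Map each prompt to the models that omit the brand,
    but only when at least one other model includes it."""
    by_prompt = defaultdict(dict)
    for model, prompt, appeared in observations:
        by_prompt[prompt][model] = appeared

    gaps = {}
    for prompt, by_model in by_prompt.items():
        missing = sorted(m for m, ok in by_model.items() if not ok)
        if missing and len(missing) < len(by_model):
            gaps[prompt] = missing
    return gaps

gaps = inconsistent_prompts(observations)
print(gaps)
```

Prompts where the brand is missing everywhere are deliberately excluded here: they point to a coverage problem rather than a cross-model inconsistency.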

3. Inclusion Audit

“How often are you selected?”

Measure:

- Inclusion rate

- Frequency

What to check:

- % of prompts where you appear

Red flag:

- Low inclusion rate (you appear in fewer than roughly 20–30% of tested prompts)
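Inclusion rate is simple arithmetic: answers that mention the brand divided by answers collected. A minimal sketch follows, assuming a plain whole-word, case-insensitive match counts as a mention. The brand names and answers are placeholders, and the 0.30 cutoff is just the upper end of the range above.

```python
import re

def inclusion_rate(brand, answers):
    """Share of answers that mention the brand as a whole word."""
    if not answers:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for answer in answers if pattern.search(answer))
    return hits / len(answers)

# Placeholder answers for illustration only.
answers = [
    "Popular options include ExampleCo and OtherCo.",
    "Acme is a good fit for small teams.",
    "Most teams start with ExampleCo.",
    "OtherCo is a common choice.",
]

RED_FLAG_THRESHOLD = 0.30  # assumption: upper end of the 20-30% range
rate = inclusion_rate("Acme", answers)
is_red_flag = rate < RED_FLAG_THRESHOLD
print(f"inclusion rate: {rate:.0%}, red flag: {is_red_flag}")
```

A substring match like this misses misspellings and product-line names, so treat the number as a lower bound.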

4. Context Audit

“Where do you appear vs not appear?”

Analyze:

- Use cases

- Intent types

What to check:

- Strong vs weak contexts

Red flag:

- Only appearing in niche queries
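To separate strong from weak contexts, the same appearance data can be grouped by intent type. This sketch assumes each prompt has already been labeled with a context. The labels and records below are hypothetical.

```python
from collections import defaultdict

def inclusion_by_context(records):
    """Map each context label to the share of prompts where the brand appeared."""
    totals = defaultdict(lambda: [0, 0])  # context -> [hits, total]
    for context, appeared in records:
        totals[context][0] += int(appeared)
        totals[context][1] += 1
    return {context: hits / total for context, (hits, total) in totals.items()}

# (context, brand_appeared): placeholder records, not real results.
records = [
    ("comparison", False),
    ("comparison", False),
    ("niche use case", True),
    ("niche use case", True),
    ("best-of list", False),
    ("best-of list", True),
]

rates = inclusion_by_context(records)
weakest_first = sorted(rates, key=rates.get)
print(weakest_first)
```

In this toy data the brand only wins the niche context, which is exactly the red-flag pattern described above.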

5. Competitor Audit

“Who replaces you?”

Identify:

- Which brands appear instead of you

What to check:

- Dominant competitors

- Co-occurring brands

Red flag:

- Same competitors appear repeatedly
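A rough way to see who replaces you is to count competitor mentions only in the answers where your brand is absent. The sketch below assumes you supply the competitor list yourself. All brand names and answers are placeholders.

```python
import re
from collections import Counter

def brands_in(text, brands):
    """Return the subset of brands mentioned in text (whole word, case-insensitive)."""
    return {
        brand for brand in brands
        if re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE)
    }

def replacing_competitors(answers, own_brand, competitors):
    """Count competitor mentions in answers that do not mention own_brand."""
    counts = Counter()
    for answer in answers:
        if brands_in(answer, [own_brand]):
            continue  # the brand was included, so nobody replaced it here
        counts.update(brands_in(answer, competitors))
    return counts

# Placeholder answers for illustration only.
answers = [
    "ExampleCo and OtherCo are the usual picks.",
    "Acme and ExampleCo both fit.",
    "ExampleCo is widely used.",
]

counts = replacing_competitors(answers, "Acme", ["ExampleCo", "OtherCo"])
print(counts.most_common())
```

Running the same count on answers that do include your brand gives the co-occurring brands instead.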

6. Positioning Audit

“How are you described?”

Look at:

- Descriptions

- Roles

What to check:

- Leader vs alternative vs niche

Red flag:

- Weak or unclear positioning

7. Entity & Association Audit

“Does AI understand your brand?”

Evaluate:

- Category clarity

- Associations

- Use cases

What to check:

- Is your brand clearly defined?

Red flag:

- Confused or inconsistent representation
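Inconsistent representation can be put into a number if you note which category each answer assigns to your brand. A small sketch, assuming those category labels have already been extracted by hand or by another step. The labels are hypothetical.

```python
from collections import Counter

def category_consistency(labels):
    """Return (dominant_label, share_of_answers_using_it), or (None, 0.0) if empty."""
    if not labels:
        return None, 0.0
    label, hits = Counter(labels).most_common(1)[0]
    return label, hits / len(labels)

# Category assigned to the brand in each answer: placeholder data.
labels = ["CRM", "CRM", "email marketing", "CRM"]

dominant, share = category_consistency(labels)
print(f"{dominant}: {share:.0%} of answers")
```

A low share for the dominant label suggests the brand is being placed in several categories at once.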

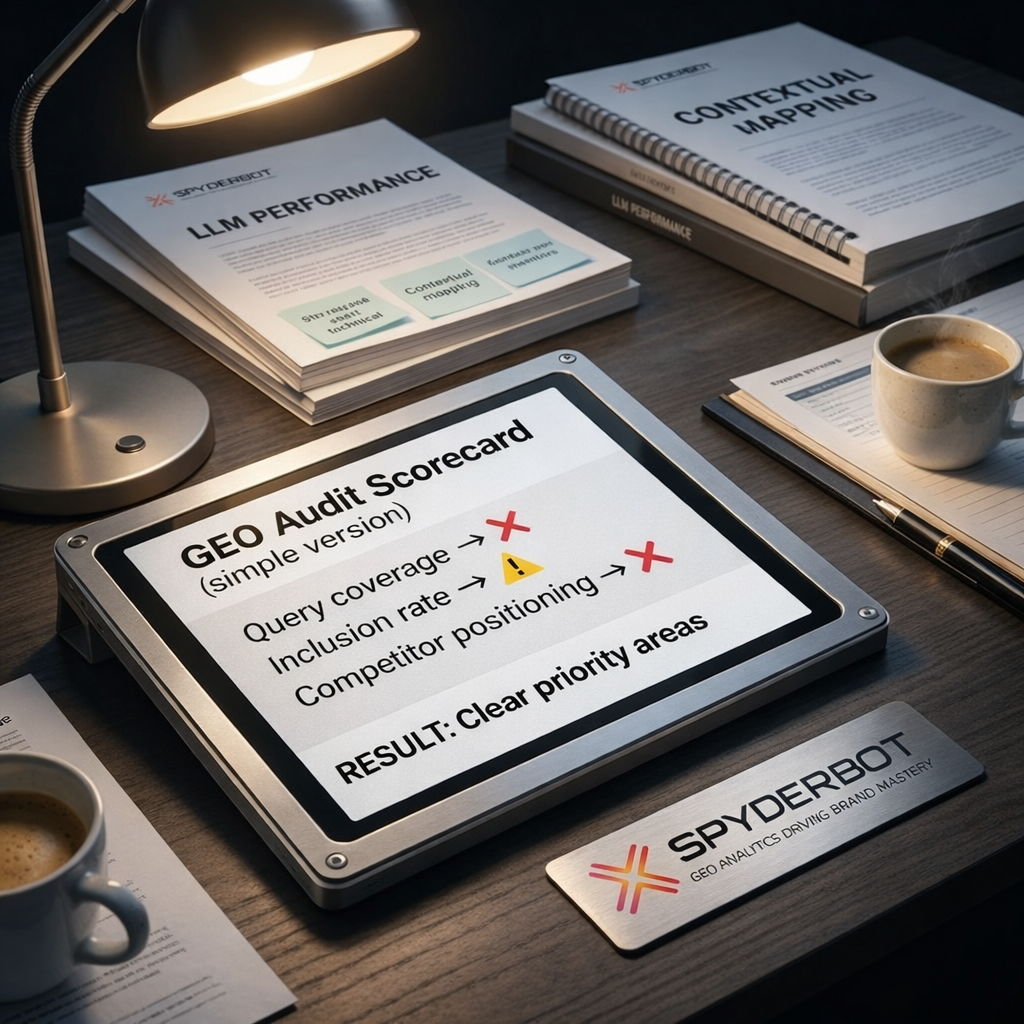

GEO Audit Scorecard (simple version)

You can score each area:

- ✅ Strong

- ⚠️ Moderate

- ❌ Weak

Example:

- Query coverage → ❌

- Inclusion rate → ⚠️

- Competitor positioning → ❌

Result:

Clear priority areas
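The scorecard can be kept as a small data structure so that the priority list falls out automatically. This is one possible encoding, not a prescribed format: the `Score` enum and `priorities` helper are hypothetical, and the scores are the example values above.

```python
from enum import IntEnum

class Score(IntEnum):
    WEAK = 0      # ❌
    MODERATE = 1  # ⚠️
    STRONG = 2    # ✅

# Example scores from the scorecard above.
scorecard = {
    "Query coverage": Score.WEAK,
    "Inclusion rate": Score.MODERATE,
    "Competitor positioning": Score.WEAK,
}

def priorities(scorecard):
    """Areas scoring below STRONG, weakest first; ties keep their input order."""
    flagged = [(area, score) for area, score in scorecard.items() if score < Score.STRONG]
    return [area for area, _ in sorted(flagged, key=lambda item: item[1])]

priority_areas = priorities(scorecard)
print(priority_areas)
```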

A realistic audit example

Company situation:

- Strong SEO

- Good content

Audit results:

- Missing in “best tools” queries

- Competitors dominate comparisons

- Weak category alignment

Diagnosis:

- Not a content issue

- A positioning + association issue

Outcome:

Clear direction for optimization

Common mistakes in GEO audits

1. Testing too few prompts

→ Not reliable

2. Using only one AI system

→ Incomplete view

3. Focusing only on mentions

→ Missing context

4. Ignoring competitors

→ No benchmark

5. No structured framework

→ No clarity

Manual audit vs system-driven audit

Manual:

- Limited prompts

- No scale

- No consistency

System-driven:

- Large-scale coverage

- Pattern detection

- Reliable insights

Key insight

Enterprise GEO requires scale

Not manual testing

When should you run a GEO audit?

1. You are not showing up in ChatGPT

2. Competitors dominate AI answers

3. You launch a new category

4. You see an unexplained pipeline drop

What to do after a GEO audit

1. Fix entity clarity

2. Improve category positioning

3. Expand context coverage

4. Strengthen associations

5. Track and iterate

Final conclusion

A GEO audit gives you:

- Clarity

- Direction

- Prioritization

Final insight

You cannot fix what you don’t understand

A GEO audit is the first step to:

Winning in AI search
